Multimodal Multi-Agent Interaction

Two robots with contrasting personalities (high and low extraversion) engage with a human through multimodal perception, memory, and coordinated behavior, supporting personalized and coherent multi-agent interaction.

Our Approach: M2HRI

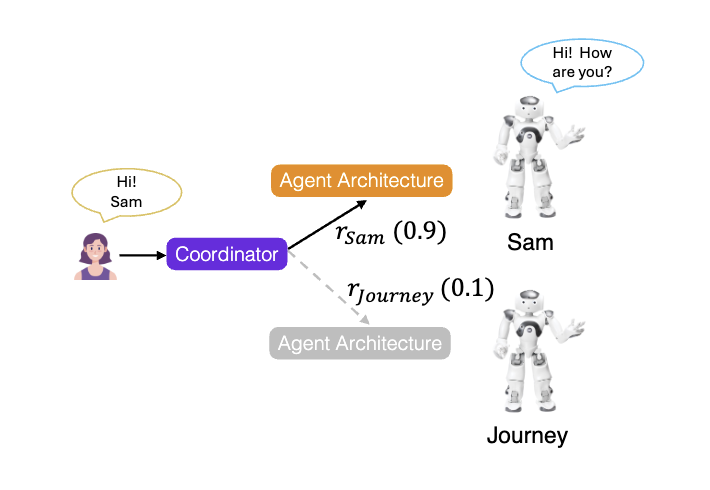

Each robot in M2HRI is an independent agent with its own identity: a distinct personality, a private memory, and its own view of the world. At the same time, a shared coordinator governs how the robots interact as a team. The result is a system in which the robots feel distinct from each other, remember who they are talking to, and hand off the conversation naturally.
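To make the per-agent structure concrete, here is a minimal Python sketch, assuming a prompt-based LLM agent. The class names (`BigFive`, `AgentMemory`, `RobotAgent`), the 0-to-1 trait scale, the 0.5 high/low cutoff, and the six-turn working-memory window are hypothetical illustrations, not M2HRI's implementation. It shows the separation described above: each robot carries its own personality and its own memory object, and both are written into that robot's reasoning context.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List


@dataclass
class BigFive:
    # Hypothetical trait scores in [0, 1]; the real scale used by M2HRI is not given here.
    openness: float
    conscientiousness: float
    extraversion: float
    agreeableness: float
    neuroticism: float

    def describe(self) -> str:
        # Render each trait as "high"/"low" (assumed 0.5 cutoff) so it can go into a prompt.
        return ", ".join(
            f"{'high' if score >= 0.5 else 'low'} {trait}"
            for trait, score in vars(self).items()
        )


@dataclass
class AgentMemory:
    # Working memory: a bounded buffer of recent turns for short-term coherence.
    working: Deque[str] = field(default_factory=lambda: deque(maxlen=6))
    # Semantic memory: stable facts per user, such as preferences.
    semantic: Dict[str, Dict[str, str]] = field(default_factory=dict)
    # Episodic memory: one summary string per past session.
    episodic: List[str] = field(default_factory=list)


@dataclass
class RobotAgent:
    name: str
    personality: BigFive
    # default_factory gives every agent its own, private memory object.
    memory: AgentMemory = field(default_factory=AgentMemory)

    def build_prompt(self, user_id: str) -> str:
        # Combine identity, personality, and this agent's own memories into LLM context.
        prefs = self.memory.semantic.get(user_id, {})
        recent = " | ".join(self.memory.working)
        return (
            f"You are {self.name}, a robot with {self.personality.describe()}.\n"
            f"Known preferences of {user_id}: {prefs or 'none yet'}.\n"
            f"Recent turns: {recent or 'none'}."
        )


# Usage: two robots that differ only in extraversion, each with separate memory.
robot_a = RobotAgent("Robot-A", BigFive(0.6, 0.6, 0.9, 0.6, 0.3))
robot_b = RobotAgent("Robot-B", BigFive(0.6, 0.6, 0.1, 0.6, 0.3))
robot_a.memory.semantic["alice"] = {"favorite_drink": "green tea"}
robot_a.memory.working.append("alice: Hi again!")
print(robot_a.build_prompt("alice"))
print(robot_b.build_prompt("alice"))  # Robot-B has no record of alice's preferences
```

Because memory is created per instance rather than shared, what Robot-A learns about a user stays with Robot-A unless some coordination step passes it on.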

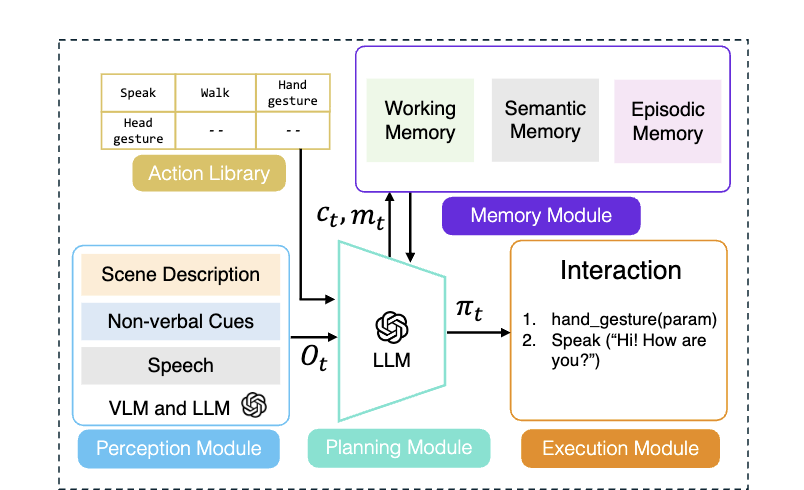

| Component | Role in M2HRI |
|---|---|
| Personality | Big Five traits injected directly into each agent's reasoning, shaping how it speaks and responds |
| Memory | Working memory for short-term coherence; semantic and episodic memory for personalization across sessions |
| Perception | Joint vision and speech input processed by a vision-language model (VLM), grounding spoken references in the physical scene |
| Planning | An LLM generates sequenced policies of speech, gesture, and movement, conditioned on personality and memory |
| Coordination | A centralized LLM scores each agent's suitability to respond, treating turn-taking as a social decision rather than a timing rule |
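The coordination row treats turn-taking as deciding who should respond. A minimal sketch of that selection step follows, assuming the LLM's judgment is available as a per-agent suitability score in [0, 1]. `toy_score` is a hypothetical keyword heuristic standing in for the LLM call, and `choose_responder` with its 0.5 threshold is an illustrative name and value, not M2HRI's API.

```python
from typing import Callable, List, Optional

# Hypothetical signature: score_fn(utterance, agent_name) -> suitability in [0, 1].
ScoreFn = Callable[[str, str], float]


def choose_responder(
    utterance: str,
    agents: List[str],
    score_fn: ScoreFn,
    threshold: float = 0.5,
) -> Optional[str]:
    """Return the single most suitable agent, or None if nobody should speak."""
    scores = {agent: score_fn(utterance, agent) for agent in agents}
    best = max(scores, key=scores.get)
    # Only one agent may take the turn, which rules out overlapping speech.
    return best if scores[best] >= threshold else None


def toy_score(utterance: str, agent: str) -> float:
    # Stand-in for the LLM judgment; NOT M2HRI's scoring logic.
    if agent.lower() in utterance.lower():
        return 1.0  # a robot addressed by name is the obvious responder
    # Hypothetical default: the more extraverted Robot-A volunteers more readily.
    return {"robot-a": 0.6, "robot-b": 0.4}.get(agent.lower(), 0.0)


robots = ["Robot-A", "Robot-B"]
print(choose_responder("Robot-B, what do you think?", robots, toy_score))  # Robot-B
print(choose_responder("Does anyone know a good story?", robots, toy_score))  # Robot-A
print(choose_responder("Does anyone know a good story?", robots, toy_score, 0.7))  # None
```

Taking a single argmax means at most one robot speaks per turn, so overlap is avoided by construction; the threshold lets the team stay silent when no robot is a good fit. A fuller version could also pass dialogue history or perceptual context to the scorer; this sketch omits both.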

Findings & Insights

We evaluated M2HRI through a controlled user study with 105 participants across seven experimental conditions, varying personality, memory, and coordination independently.

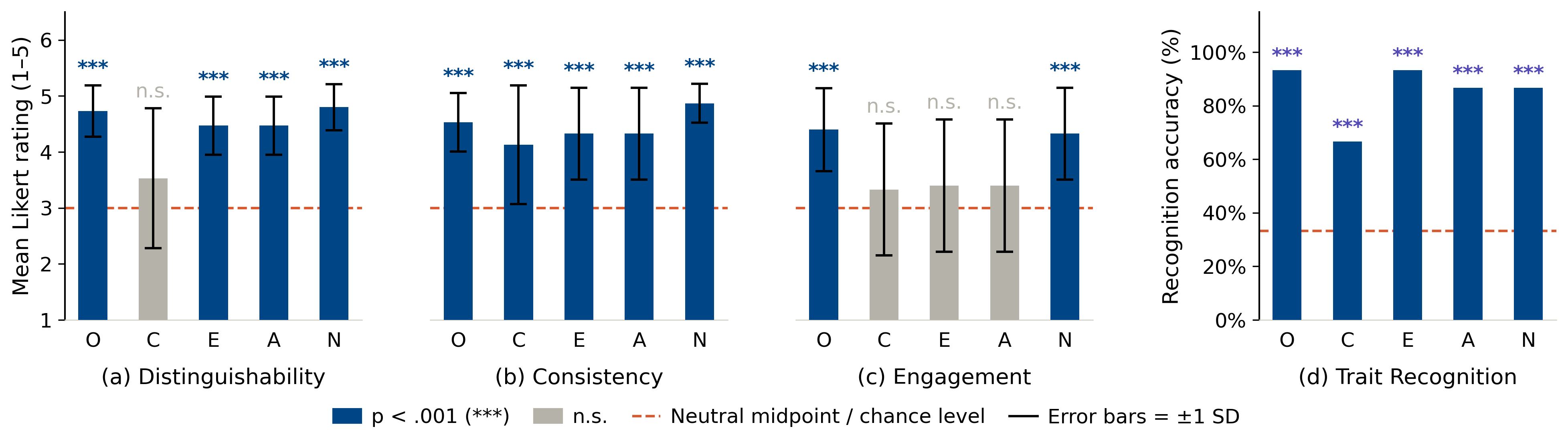

1. Personality is perceptible

Participants reliably distinguished and recognized the intended personality traits across all five Big Five dimensions, and rated consistency as high throughout each interaction.

Figure: Personality evaluation results (RQ1). Mean Likert ratings (±1 SD) for (a) distinguishability, (b) consistency, and (c) engagement across the five Big Five trait conditions (O = Openness, C = Conscientiousness, E = Extraversion, A = Agreeableness, N = Neuroticism). The dashed line marks the neutral midpoint (μ₀ = 3.0); stars denote significance against μ₀ from one-sample t-tests. (d) Personality trait recognition accuracy; the dashed line marks chance level (33.3%).

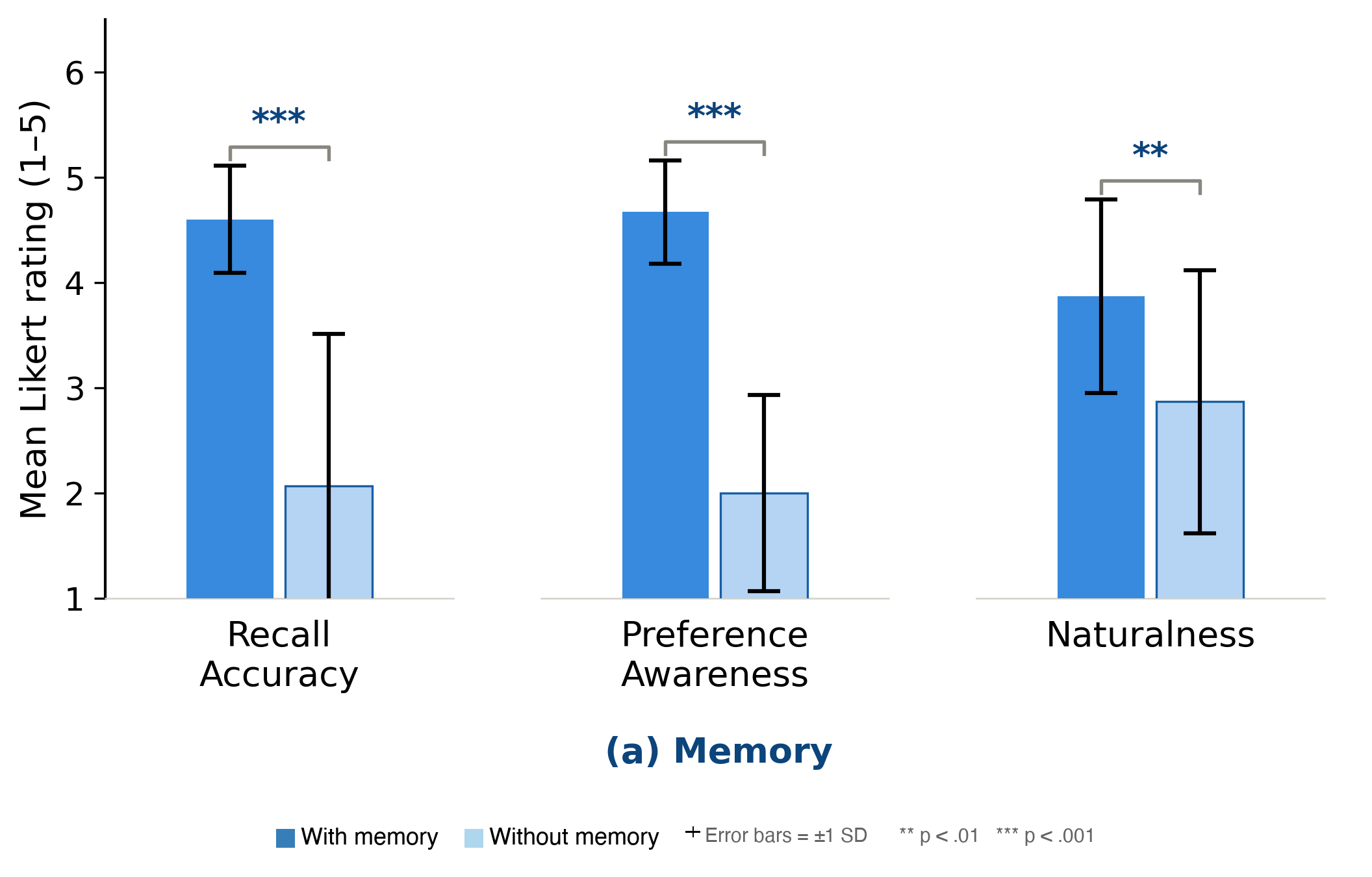
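The one-sample t-tests in the personality caption compare each condition's mean rating with the neutral midpoint μ₀ = 3.0 using the standard statistic t = (x̄ − μ₀) / (s / √n) with n − 1 degrees of freedom, where s is the sample standard deviation. The sketch below computes it with the Python standard library; the ratings are invented for illustration and are not study data. For a p-value, SciPy's `scipy.stats.ttest_1samp` performs the same test.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def one_sample_t(ratings: Sequence[float], mu0: float = 3.0) -> Tuple[float, int]:
    """t statistic and degrees of freedom for H0: the population mean equals mu0."""
    n = len(ratings)
    standard_error = stdev(ratings) / math.sqrt(n)  # stdev uses the n - 1 denominator
    return (mean(ratings) - mu0) / standard_error, n - 1


# Made-up Likert ratings for illustration only (not data from the study).
t, df = one_sample_t([4, 5, 4, 3, 5])
print(f"t({df}) = {t:.2f}")  # t(4) = 3.21
```

Here x̄ = 4.2 and s² = 0.7, so t = 1.2 / √(0.7 / 5) ≈ 3.21; a positive t means ratings sit above the neutral midpoint.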

2. Memory enables personalization

Long-term memory drove significant improvements in recall accuracy and preference awareness; without it, agents were coherent but impersonal.

Figure: Paired bar charts compare condition means (±1 SD) with and without each component, on three measures per component. Memory measures: (a) recall accuracy, (b) preference awareness, (c) naturalness. Brackets indicate pairwise significance from paired-sample t-tests (** p < .01, *** p < .001).

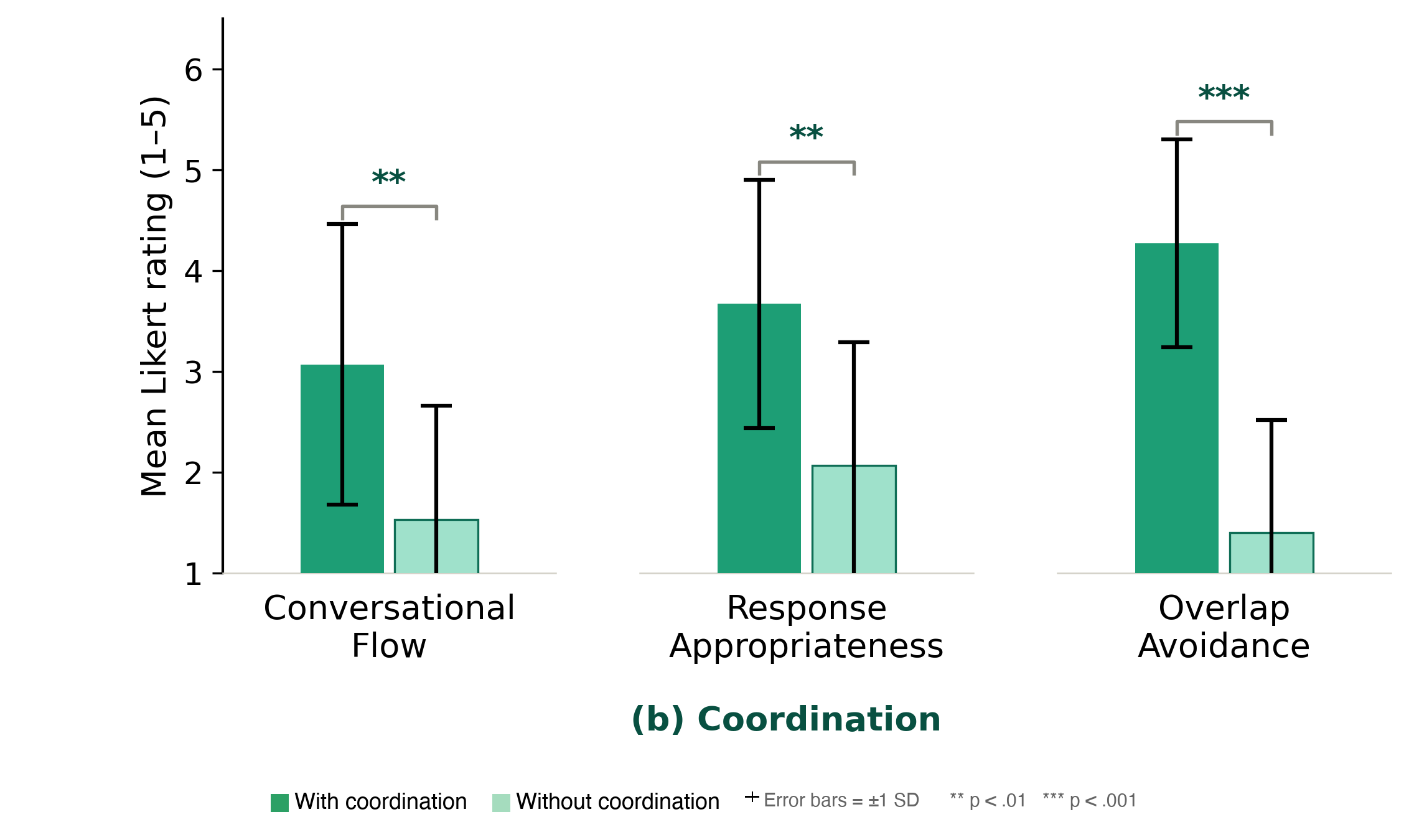

3. Coordination is a social problem

Centralized coordination strongly reduced conversational overlap and improved response appropriateness: turn-taking in multi-agent HRI requires knowing who should respond, not just when to speak.

Figure: Paired bar charts compare condition means (±1 SD) with and without each component, on three measures per component. Coordination measures: (d) conversational flow, (e) response appropriateness, (f) overlap avoidance. Brackets indicate pairwise significance from paired-sample t-tests (** p < .01, *** p < .001).
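The memory and coordination comparisons both use paired-sample t-tests. A paired test reduces to a one-sample test on per-participant differences: with dᵢ = (rating with the component) − (rating without it), t = d̄ / (s_d / √n) with n − 1 degrees of freedom. The sketch below implements this with the standard library on invented ratings (not study data); SciPy's `scipy.stats.ttest_rel` gives the same statistic along with a p-value.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def paired_t(with_cond: Sequence[float], without_cond: Sequence[float]) -> Tuple[float, int]:
    """Paired-sample t: a one-sample test of per-participant differences against 0."""
    if len(with_cond) != len(without_cond):
        raise ValueError("paired samples must have equal length")
    diffs = [w - wo for w, wo in zip(with_cond, without_cond)]
    n = len(diffs)
    return mean(diffs) / (stdev(diffs) / math.sqrt(n)), n - 1


# Made-up ratings from the same five participants (illustration only).
with_memory = [4, 5, 4, 5, 3]
without_memory = [3, 3, 4, 2, 2]
t, df = paired_t(with_memory, without_memory)
print(f"t({df}) = {t:.2f}")  # t(4) = 2.75
```

Pairing matters because each participant serves as their own baseline: differences in how individuals use the Likert scale cancel out in dᵢ, which an unpaired test would treat as noise.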

Interaction Examples

Personality: Two robots with contrasting levels of neuroticism respond to the loss of a pet, showing clear differences in emotional tone. Robot-A (high neuroticism) reacts more emotionally, while Robot-B (low neuroticism) remains calm and composed.

Memory: The robots remember and reuse the user’s preferences from earlier interactions, enabling more personalized responses over time.

Coordination: The robots coordinate their responses by taking turns naturally, avoiding interruptions and maintaining a smooth conversation.